Are self-driving cars a reality or just a sci-fi fantasy? The debate continues to intensify as skeptics question the feasibility of autonomous driving. A recent video interview between Dave Lee and James Douma provides deep insights into the inner workings of Tesla's 'V12' self-driving technology. Dave Lee, a tech-focused YouTuber, has built a reputation for his thorough technology reviews, while James Douma, a machine learning expert, brings a wealth of knowledge about AI and its potential.

Using this video as a guide, I'm exploring Tesla's 'V12' self-driving technology to understand its underlying principles and demystify how it works. My assessment suggests we're on the cusp of significant breakthroughs, but these might not align with our initial expectations. Let's dive in to explore the technology and uncover what the future holds.

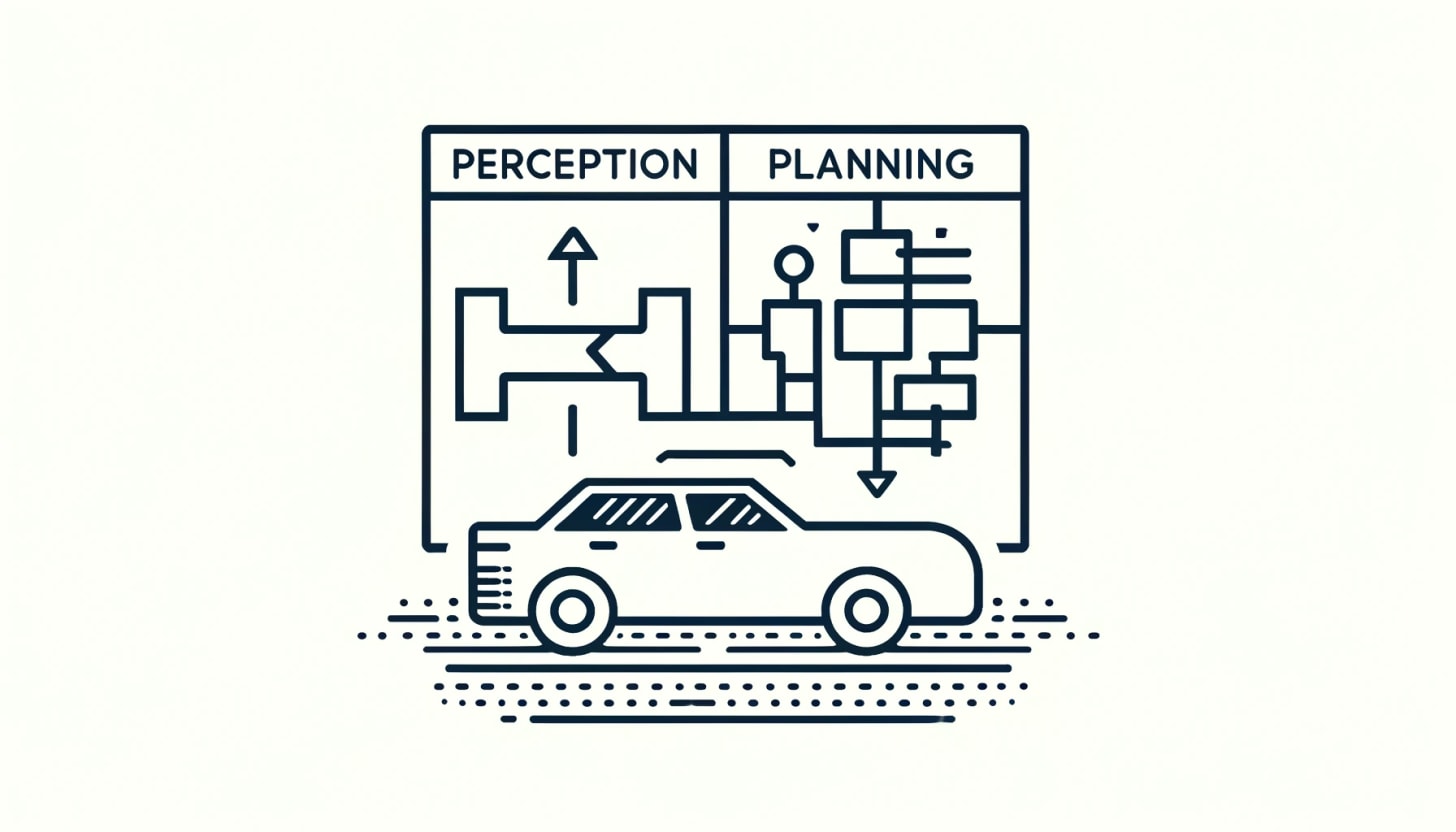

Self-Driving Architecture

A self-driving system has two main components: perception and planning. The perception system takes in raw data from cameras and other sensors to interpret key information, like the presence of other vehicles on the road. Neural networks are commonly used for this purpose due to their ability to process complex visual information. The planning system then translates this interpretation into commands that control the car's speed and direction. Traditionally, planning has relied on deterministic programming, with Tesla's Full Self Driving (FSD) Version 11 containing about 300,000 lines of C++ code.
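To make the split concrete, here is a minimal sketch of a perception stage feeding a rule-based planner. Everything in it is an illustrative assumption rather than Tesla's code: the names `Scene`, `Command`, `perceive`, `plan_with_rules` and `drive_step`, the two-second following gap, and the acceleration values are all hypothetical, and the "perception" step simply reads pre-labelled values where a real system would run a neural network.

```python
from dataclasses import dataclass


@dataclass
class Scene:
    """Hypothetical perception output: what the car believes is around it."""
    lead_vehicle_distance_m: float  # distance to the vehicle ahead
    speed_limit_mps: float          # current speed limit
    own_speed_mps: float            # the car's own speed


@dataclass
class Command:
    """Hypothetical planner output sent to the vehicle controls."""
    acceleration_mps2: float
    steering_angle_rad: float


def perceive(sensor_frame: dict) -> Scene:
    # Stand-in for the perception network: the frame already holds labels,
    # whereas a real system would infer them from camera images.
    return Scene(
        lead_vehicle_distance_m=sensor_frame["lead_distance_m"],
        speed_limit_mps=sensor_frame["speed_limit_mps"],
        own_speed_mps=sensor_frame["speed_mps"],
    )


def plan_with_rules(scene: Scene) -> Command:
    # Deterministic planning: explicit, hand-written rules (assumed values).
    safe_gap_m = 2.0 * scene.own_speed_mps  # two-second following gap
    if scene.lead_vehicle_distance_m < safe_gap_m:
        return Command(acceleration_mps2=-2.0, steering_angle_rad=0.0)  # brake
    if scene.own_speed_mps < scene.speed_limit_mps:
        return Command(acceleration_mps2=1.0, steering_angle_rad=0.0)   # speed up
    return Command(acceleration_mps2=0.0, steering_angle_rad=0.0)       # hold speed


def drive_step(sensor_frame: dict) -> Command:
    # One control cycle: interpret the sensors, then decide what to do.
    return plan_with_rules(perceive(sensor_frame))


if __name__ == "__main__":
    frame = {"lead_distance_m": 10.0, "speed_limit_mps": 30.0, "speed_mps": 20.0}
    print(drive_step(frame))
```

In this picture, every behavior the car can show has to be written down as a rule in `plan_with_rules`, which hints at how a production planner could grow to hundreds of thousands of lines.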

In Version 12, Tesla has taken a significant step by using a neural network for planning as well. As Dave Lee describes it:

"V12 is a little bit of a misnomer because this is a drastic rewrite of their whole architecture approach. It seems like they kept a lot of their neural networks in the perception stack, but in their planning stack they're starting from scratch completely."

This shift raises questions about what kind of results neural networks will produce when they generate physical behavior, and whether this new approach can match the safety standards we would like to see.

Behavioral Guardrails in Self-Driving Systems

Deterministic programming has long been considered a safety guardrail for self-driving systems, relying on a set of logical rules to make decisions. Removing 300,000 lines of C++ code from Tesla's planning system therefore seems risky. However, James Douma offers a different perspective, pointing to the weaknesses of such rule-based (heuristic) systems:

"Heuristic frameworks, built on a set of logical rules, tend to fail dramatically when they do fail. This is because if there's a logical flaw or an unanticipated scenario, the system can behave in ways completely contrary to what was intended."

Douma points out that human behavior in driving has evolved over time, with drivers developing reflexes and adapting to various traffic situations to ensure safety. As he elaborates:

"The system we have has co-evolved with drivers. We develop reflexes, learn to read traffic and the environment, and adapt our behaviors to maximize safety. […] The road system itself has been designed over many years to leverage human strengths and mitigate weaknesses."

This evolution allows for flexibility, where decisions can be adjusted as needed. The system doesn't require every single choice to be perfect, but rather relies on a chain of approximately correct decisions. Douma summarizes this concept:

"Human beings are constantly striving to maximize their safety margins to feel more comfortable and better understand their surroundings."

Tesla's bold move to rely on neural networks suggests a change in the underlying vision of driving, shifting from a logic-based approach to a behavior-based one. This raises the question: where does this behavior come from, and how can it be safely replicated in a self-driving system?

Mimicking Behavior

Tesla collects a massive amount of driving data from its cars, creating a vast database of driving videos. This data allows Tesla to train its neural networks by focusing on good driving behavior. As James Douma explains:

"In the context of human mimicry, the scoring system evaluates how closely the neural network's output aligns with a human's behavior. During training, we show the network a clip it has never seen before and ask, 'What would you do here?' We then rate the network based on how closely its response matches a human's actions in the same scenario."

This approach enables the system to mimic thousands of subtle behaviors that contribute to safe driving. Douma highlights how the planning system now learns from experienced drivers, performing small preparatory actions that give more time to react and enhance situational awareness.
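The scoring Douma describes can be sketched as a comparison between the action a policy proposes and the action the human actually took on clips held out from training. This is a minimal illustration under stated assumptions, not Tesla's training code: representing an action as a `(steering, acceleration)` pair, using squared error as the distance, and the names `mimicry_loss` and `score_policy` are all hypothetical choices.

```python
from typing import Callable, Sequence, Tuple

Observation = Tuple[float, ...]  # hypothetical features extracted from a clip
Action = Tuple[float, float]     # hypothetical (steering, acceleration) pair


def mimicry_loss(predicted: Action, human: Action) -> float:
    """Squared distance between the policy's action and the human's action."""
    return sum((p - h) ** 2 for p, h in zip(predicted, human))


def score_policy(
    policy: Callable[[Observation], Action],
    held_out_clips: Sequence[Tuple[Observation, Action]],
) -> float:
    """Average loss over unseen clips; lower means closer to human behavior."""
    losses = [mimicry_loss(policy(obs), human) for obs, human in held_out_clips]
    return sum(losses) / len(losses)


if __name__ == "__main__":
    # Made-up clips: each pairs an observation with what the human did.
    clips = [((1.0,), (0.0, 1.0)), ((2.0,), (0.5, 1.0))]

    def always_straight(obs: Observation) -> Action:
        # Toy policy: never steers, always accelerates gently.
        return (0.0, 1.0)

    print(score_policy(always_straight, clips))
```

During training, a score like this would be used to nudge the network's parameters toward lower loss. The sketch only shows the evaluation step, in which the network is asked "What would you do here?" and is rated against the human.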

However, mimicry can also lead to unexpected or even undesirable behaviors. For example, a Twitter/X post shows FSD V12 slowing down to examine an antique car — a move that makes sense for a human driver but seems odd for an automated system. In another example, FSD attempts to cut in line, raising concerns about whether this could lead to accidents.

These examples show that while FSD V12 can replicate curated human behavior, it isn't perfect and can reproduce unwanted habits. This invites critical thinking about how to manage these new possibilities, ensuring that the benefits of mimicry are maximized while minimizing risks.

Navigating the Shift to AI-Driven Cars

The common assumption is that an AI driver must be perfect, but human drivers are far from it. Some are highly skilled, while others get tired, distracted, or even drive under the influence. An AI driver that consistently matches the capability of a competent human could therefore already be a significant improvement. As James Douma explains:

"The decision-making doesn't have to be exceptional; the combination of its perception and its indefatigability (its inability to get tired), combined with good human-level decision-making creates a superhuman driver. As a near-term goal, that's excellent and would offer tremendous benefits."

The pursuit of perfection is also called into question by supervised driving. An intervention, where the human overrides the AI, is a disagreement between the two, and it doesn't always mean the human is right. Douma observes that with early Version 11, about 80% of interventions were justified. With Version 12, the numbers have flipped: about 80% of the time, the AI was correct and the intervention was unnecessary. This suggests the system's growing capability might already exceed average human driving skills.

This shift brings us to a critical dilemma: How do we manage a self-driving system that may already outperform humans? Our entire framework for road transport is built around human drivers, including policies, laws, and expectations for behavior. We know how to address human errors, but the dynamics change when AI plays a larger role.

With Tesla's FSD V12, the responsibility for driving decisions moves from individuals to a large company. This centralized ownership of driving behavior raises broader questions about societal implications. While the safety benefits might be widely embraced, other aspects, like environmental impact, urban livability, and social fairness, will require careful alignment.

Although we are on the brink of a breakthrough in safety, this is just the beginning. The challenge ahead is to ensure that AI-driven cars serve the broader wellbeing of society, necessitating thoughtful policies and regulations to manage this transition effectively.

Beyond Driving: Broader Applications of Mimicry

It is eye-opening to realize that the success of Tesla's FSD V12 is less about developing new driving principles and more about cleverly capturing and mimicking existing human behavior. It suggests that success in this domain depends not on the complexity of the task, but on how effectively we can record and replicate human actions.

While driving, a person is fully encapsulated by the machine. All inputs and outputs flow through the car's interface, with limited direct interaction between the human and the environment. Once a car is fully computerized and connected, and AI developers have legal access to this data, the preconditions for mimicry are met.

Many envision extending the principles of self-driving technology to humanoid robots or universal manufacturing robots. While this prospect is exciting, collecting data for these approaches presents significant challenges. Unlike cars, humans aren't inherently computerized, and there are valid privacy concerns about indiscriminate data collection. This suggests that a breakthrough in humanoid robots may be further away than we hope.

Instead, we could see breakthroughs in environments where humans are similarly encapsulated in computerized systems. Examples include airplane pilots and traffic controllers. DARPA's recent success with an autonomous jet fighter demonstrates the potential for mimicry in such fields, indicating that applications in these domains, though less "universal," can be profoundly impactful.

Given these broader applications, it's essential to consider the implications of mimicry and what it might mean for future automation and AI-driven systems. As we venture into these new territories, what challenges and opportunities lie ahead, and how can we ensure that this technology serves the broader good of society?

Conclusion

Tesla's 'V12' self-driving technology appears to be a significant breakthrough, primarily due to its approach of cleverly mimicking human behavior. Although it may not be immediately apparent that human behavior can be captured and replicated, once you understand this precondition, the system's workings become more comprehensible.

Given that human drivers are prone to errors, fatigue, and distractions, embracing AI drivers has clear benefits, especially for road safety. However, this is just the beginning. The rise of AI-driven cars represents a major shift in our road transport system, with potentially enormous societal consequences. These implications need careful thought and will require adaptive government policies to ensure safety and fairness.

Additionally, this breakthrough sheds light on the nature of AI. While many hoped for a powerful and universal thinking machine, what we see is the clever replication of pre-existing behavior. This shifts our expectations for AI. Applications like humanoid robots may be further off than anticipated, but other possibilities, like fully autonomous fighter jets, are equally significant and possibly alarming.

As we move forward, it's crucial to think critically about the broader impact of these technologies. How will they reshape our society, and what measures should be taken to ensure they align with our values and priorities? The future of AI holds great promise, but it also demands careful consideration to navigate the risks and opportunities ahead.